Dashcam Video Shows Dangerous Situation

Modern cars are capable of impressive things with minimal driver intervention. They can brake, accelerate, and stay in their lane on the highway independently. However, the true test for any driver assistance system is how it behaves in non-standard or dangerous situations. Sometimes these systems fail, which can lead to serious incidents.

Incident at a Railway Crossing

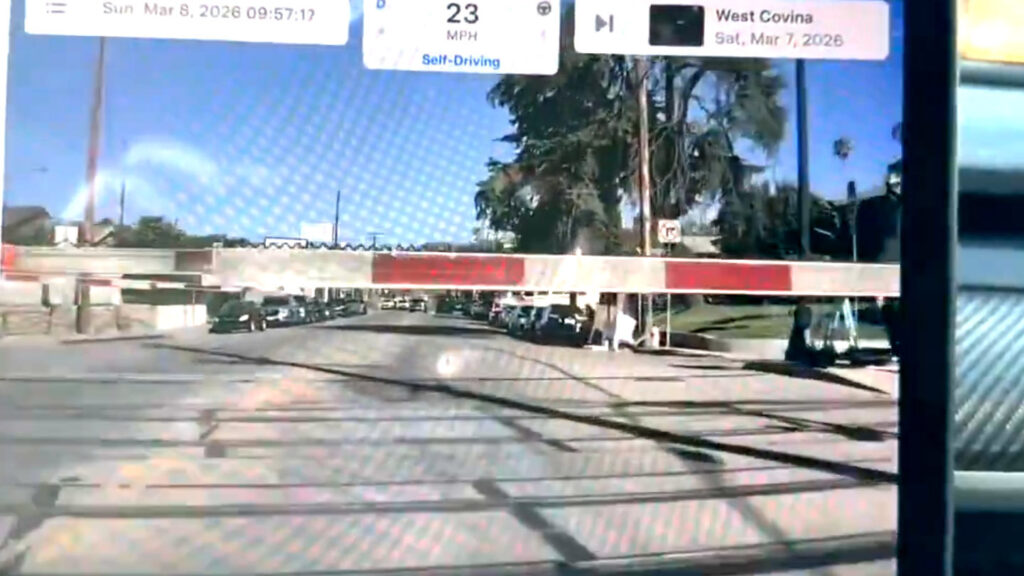

One Tesla appears to have suffered exactly this kind of failure. In a video first published on social media, the car approaches a railway crossing just as the barriers begin to lower. Despite the obvious obstacle, the car keeps moving and, according to the video, drives through the first barrier arm.

The driver seems to realize the danger only at the last moment, and the braking comes too late. As a result, the car stops right on the tracks. Fortunately, a quick press of the accelerator lets it push through the second barrier arm and clear the tracks before the train arrives.

Online Reaction and Disappearance of Information

The original social media post containing the video was titled “Tesla FSD almost killed me today”. The post was later deleted, however, which makes it difficult to verify key details of the event. In particular, it is now impossible to determine the car’s hardware or which version of the “Full Self-Driving” (FSD) software was active at the time of the incident.

“Tesla FSD almost killed me today” Watch @Tesla FSD smash through a railroad crossing barrier at 23mph as a train approaches! @ElonMusk your defective software is putting innocent lives at risk. FSD should be banned from public roads.

Why Did the System Fail?

This case looks particularly problematic for a system called “Full Self-Driving”. Tesla’s camera-based approach is designed to analyze visual information from the environment and react accordingly. In this situation, the system seems to have ignored some of the most obvious signals on the road: flashing lights and the physical obstacle of a lowered barrier.

It is important to remember that, despite its name, FSD is classified as a Level 2 driver assistance system. This means the person behind the wheel remains fully responsible for monitoring the road and intervening in time. In the video, the driver applies the brakes, but only after the car has already hit the barrier. It remains unclear whether the driver was pressing the accelerator at the same time or whether other factors played a role.

Context of Regulatory Scrutiny

Without additional information, and with the original post deleted, the full context of the incident may never become clear. What is clear is that it comes at a very unfortunate time for Tesla. The company is under investigation by the U.S. National Highway Traffic Safety Administration (NHTSA) over the performance of its FSD system. Tesla is also actively working to deploy its Robotaxi network of driverless taxis. Incidents like this, in which the system fails to respond to an obvious danger, do little to build confidence in either the technology or the company’s plans.

This case is a reminder of the fundamental challenges facing developers of autonomous systems. Even the most sophisticated algorithms can fail in unforeseen circumstances, particularly when they must interpret complex visual signals in high-pressure situations. For now, the technology cannot fully replace human attention and instincts, especially when lives are at stake. The railway crossing incident is a clear signal to both manufacturers and regulators that the road to truly autonomous transport is still long and demands extremely careful testing and refinement of safety systems. Videos like this are likely to intensify public debate about how far such systems should be allowed on public roads.